The Intentional Organisation - Issue #50 - The Productivity Numbers

👉 On the curious gap between what AI can do and what AI is doing.

1. The Productivity Numbers

Last issue I promised the productivity numbers, and pointed at Anthropic’s labour-market paper as the place where the evidence is starting to consolidate. Two weeks on, I want to do that piece of work properly, because the numbers turn out to tell a more interesting story than the headline summaries suggest — and the story is, again, mostly about design.

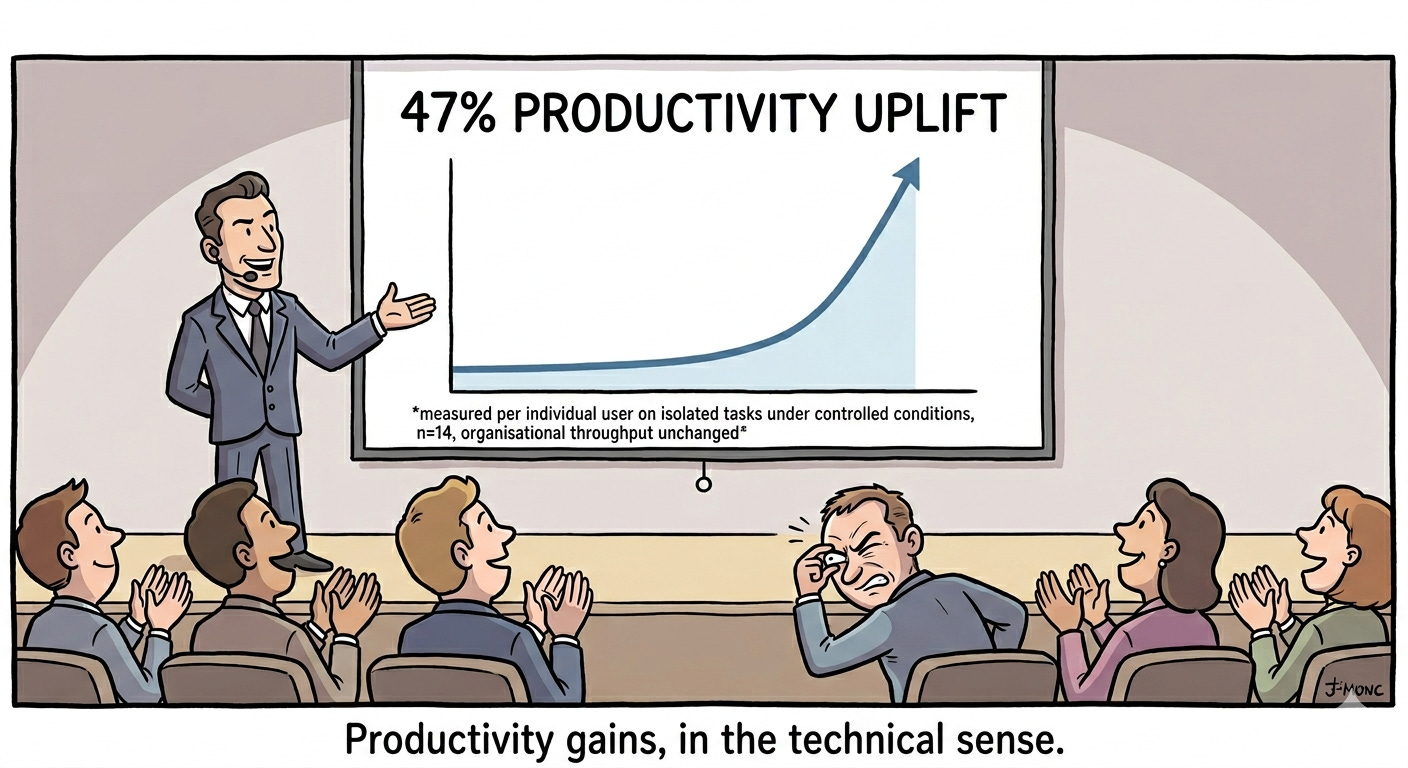

I should say this before going further: I’m writing this sitting through the same vendor pitches everyone else is sitting through. Decks full of double-digit Productivity gains, case studies that never quite specify the comparison group, ROI calculations resting on assumptions you cannot interrogate without the speaker getting visibly impatient. I am not against the technology. I’m sceptical of the numbers, and the longer I look at the academic evidence the more I think the scepticism is the responsible reading.

What the macroeconomists actually say

Start with Daron Acemoglu, who is not a sceptic by temperament so much as by arithmetic. His paper The Simple Macroeconomics of AI estimates that AI will add no more than around two-thirds of a percent to total factor productivity over a decade and, on the paper’s own more conservative reading, closer to half a percent. A “nontrivial but modest” effect, in his phrase. Multiply the share of tasks that are AI-exposed by the share of those that are economically automatable and by the cost saving per automated task, and the macro number that falls out is close to a rounding error.

You can argue with the parameters — Goldman Sachs and McKinsey both do, with cheerier results — but the structure of the argument is hard to escape. AI does not transform every task in every job. It transforms some tasks in some jobs. The macro impact is a function of how many tasks, how much saving, how widely deployed, and the answer keeps coming back as “less than the deck implied”.
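The structure of that multiplication is easy to sketch. What follows is a minimal illustration, not Acemoglu's model: the 20% exposed share is the figure quoted later in this issue, while the automatable share and the cost saving per task are assumed values, chosen to sit in the neighbourhood of those usually attributed to the paper. Check them against the paper before reusing them. The function name is hypothetical.

```python
def tfp_gain(exposed_share: float, automatable_share: float, cost_saving: float) -> float:
    """Aggregate productivity gain as the product of three shares.

    exposed_share     -- share of tasks exposed to AI
    automatable_share -- share of exposed tasks worth automating economically
    cost_saving       -- average cost saving on each automated task
    """
    return exposed_share * automatable_share * cost_saving


# Assumed inputs (illustrative only): 20% exposed, 23% of those automatable,
# 14.4% cost saving on each automated task.
baseline = tfp_gain(0.20, 0.23, 0.144)

# Sensitivity check: double the exposed share and keep the rest unchanged.
wider_exposure = tfp_gain(0.40, 0.23, 0.144)

print(f"baseline gain over the decade: {baseline:.2%}")
print(f"with exposure doubled:         {wider_exposure:.2%}")
```

With these assumed inputs the baseline lands at roughly two-thirds of a percent, and even doubling the exposed share leaves the total well under two percent. That is the sense in which the structure of the argument is hard to escape: each factor is a fraction, and fractions multiplied together shrink quickly.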

What Anthropic’s own data shows

This is the piece that landed in March and that I keep returning to. Anthropic’s economics team published a study using actual usage data from Claude — not surveys of intent, not vendor projections, but observed work being done — and built a measure they call observed exposure. The trick is the contrast. Theoretical exposure tells you what AI could do. Observed exposure tells you what AI is actually being used to do. The two diverge, and they diverge sharply.

The headline result is that observed exposure is a fraction of theoretical exposure across most occupational categories. In business and finance, in management, in computer and mathematical roles — the categories where vendor decks are most aggressive — theoretical coverage runs above 80% but actual usage is dramatically lower. The capability is there. The work, mostly, is not flowing through it.

What I find striking about this paper is who published it. This is Anthropic — a frontier lab with every commercial incentive to talk up adoption — telling the market that the gap between what their tool can do and what it is being used to do is wide and persistent. It’s not a sceptic’s paper presented as a believer’s. It’s a believer’s paper that happens to confirm what the sceptics have been saying all along.

Brynjolfsson’s J-curve, which is the mechanism

If you want to understand why observed exposure trails theoretical, Erik Brynjolfsson and his collaborators have been giving the same answer for the better part of a decade. General-purpose technologies, they argue, require complementary intangible investments — process redesign, organisational restructuring, workforce retraining, new governance and measurement systems — before the productivity shows up in the data. Productivity dips while these investments are being made (this is the bottom of the J-curve), then rises as the intangible capital starts to compound. We’ve seen the pattern with steam, electricity, computing. Same shape every time.

The honest reading of the current evidence is that we are sitting at the bottom of the J-curve for AI. Not because the technology is failing, but because the complementary investments (the design work) are mostly not being made. Companies are buying tools and hoping the redesign somehow happens on its own. It does not happen on its own. It has never happened on its own for any general-purpose technology in industrial history. There is one major exception: companies that are born under AI, where the J-curve still applies but the binding constraint reorders. For them, the design work is keeping the system legible to humans while AI does most of the work, not redesigning humans’ work around the system.

The free lunch is being repriced

There’s a second piece to the productivity argument that I’ve been turning over in my head, and it is this: until very recently, AI looked like a free lunch from the buyer’s side. Subscriptions at a few dollars per seat, generous limits, frontier capability one click away. That’s changing fast, on both sides of the market.

On the buyer’s side, the most concrete signal is Microsoft. From July 1st, list prices on most Microsoft 365 commercial suites are going up between roughly 5% and over 40% — with the high end driven by Frontline SKUs where the base increase stacks with the removal of legacy discounts — and Microsoft itself frames the increase as reflecting investments in AI features and infrastructure. Microsoft 365 Copilot stays at $30 per user per month on top — and there are now reports of 30 to 40% of Copilot licences sitting unused within the first 90 days of deployment in many enterprises. So organisations are about to pay materially more for a base productivity suite, partly to fund AI features many of their people aren’t using yet, while separately paying $30 per seat for an add-on whose own usage rate is, charitably, patchy. This is not how a free lunch is supposed to feel.
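The cost of the unused licences can be made concrete with a back-of-the-envelope calculation. This is a rough sketch under a strong assumption: an unused licence produces no value at all, so its cost is carried entirely by the seats that are in use. The $30 price and the 30 to 40% unused range are the figures quoted above; the function name is hypothetical.

```python
def cost_per_active_seat(list_price: float, unused_share: float) -> float:
    """Monthly cost per seat actually in use, spreading idle licences over active ones."""
    if not 0 <= unused_share < 1:
        raise ValueError("unused_share must be in [0, 1)")
    return list_price / (1 - unused_share)


COPILOT_LIST_PRICE = 30.0  # USD per user per month, as quoted in the text

low = cost_per_active_seat(COPILOT_LIST_PRICE, 0.30)   # 30% of licences unused
high = cost_per_active_seat(COPILOT_LIST_PRICE, 0.40)  # 40% of licences unused

print(f"effective cost per active user: ${low:.2f} to ${high:.2f} per month")
```

On those figures the add-on costs roughly $43 to $50 per active user per month rather than $30, before the increase in the base suite is counted.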

On the supplier’s side, the picture is even more interesting. OpenAI’s inference costs alone are projected to rise from around $8.4B in 2025 to $14.1B in 2026, and the company is on track to burn double-digit billions of dollars before reaching anything that resembles operational profitability. Anthropic’s path looks healthier on the unit economics, but only relatively. The market consensus, in the parts of the market that look at the actual financials, is that current API and subscription pricing is being subsidised below cost (funded by venture capital and hyperscaler cross-subsidy) and that prices will normalise upward over the next 12 to 24 months as capital discipline tightens.

Both sides of the market point in the same direction. The thing companies have been buying as if it were cheap is becoming visibly not-cheap. And here’s the part that matters for the productivity argument: the cost is now showing up on the balance sheet before the productivity is showing up in the output. Decks talk about ROI; the actual experience inside companies, increasingly, is paying the I and waiting for the R.

What this argument doesn’t claim is that AI capability is overhyped, or that companies should slow adoption, or that the J-curve framework holds in every case. The bullish forecasts have respectable provenance: Goldman Sachs has estimated a 7% lift to global GDP over a decade, McKinsey puts the productivity opportunity at $4.4 trillion annually. There is also firm-level evidence that cuts against my main argument: Brynjolfsson and his collaborators have reported a 14% productivity gain in customer-service operations under generative AI, a result that, if it generalises, would push Acemoglu’s macro estimate up materially. And the capability frontier is moving. The “20% of tasks exposed” parameter in Acemoglu’s calculation is a snapshot, not a constant; if the next twenty-four months produce another step change in what models can do reliably, that parameter widens and the macro number widens with it.

The honest position is therefore narrower than the headline suggests. The sceptical reading is the more defensible reading of the evidence we have. It is not the only reading consistent with the evidence we don’t yet have.

The category error that runs through the deck

There’s a confusion in the productivity discourse that I think is doing a lot of damage, and it’s one I tried to name a few years ago: the conflation of Productivity and Performance.

Productivity is a property of the system — of the Operating Model, of the way input is converted to output at the organisational level. Performance is a property of individuals and teams — how effectively they accomplish what they set out to accomplish, given the system around them.

Most of what vendor decks call “productivity gains” are, at best, individual Performance gains: a developer writing a unit test faster, an analyst getting through a first-draft memo more quickly, a customer-service agent dispatching a ticket with less typing. These gains are real. They’re also strictly local. They become organisational Productivity only if the system around them is redesigned to absorb the new constraint and reroute the freed capacity into something the organisation actually values. Without that redesign, the gain dissipates into more email, more revision cycles, more meetings about what the AI produced.

This is the move I want to keep making in this newsletter. The design layer is where Performance gains turn into Productivity gains, or fail to. The macro evidence, the firm-level evidence, the J-curve theory, and now the cost trajectory all point at the same place. The technology is not the binding constraint. The binding constraint is that nobody owns Work design as a discipline, with the seniority and the cross-functional brief required to actually redesign work around the technology — and pay back the bill that’s now arriving for the technology.

Where this is going

Next time I want to start moving from diagnosis toward capability. If “owning Work design” is the missing piece, what would it actually look like inside a real organisation? Who holds it, what authority does it need, where does it sit relative to HR, IT, Operations, Strategy? I have a working answer that I’ve been testing in practice — and I want to lay it out before the conversation drifts back into yet another argument about which copilot to deploy.

Sergio

2. Site Updates

The Laws of Organisation Design series is running again on the blog. Two additional pieces so far:

More to follow. If you find the productivity argument in this issue interesting, the Laws series is the slower, more structural companion to it.

3. Reading Suggestions

Labor market impacts of AI: A new measure and early evidence (Anthropic Economics Team)

The paper this issue circles around. The methodology section is worth reading even if you skim the rest: observed-exposure-versus-theoretical-exposure is a useful frame for any conversation about AI adoption you find yourself in this year.

The Simple Macroeconomics of AI (Daron Acemoglu)

The disciplined macro argument for modest gains. Long, technical, and worth the effort. The clearest demonstration I have read of why “AI will transform the economy” and “AI will produce around half a percent of TFP over a decade” can both be true at once.

The Productivity J-Curve: How Intangibles Complement General Purpose Technologies (Brynjolfsson, Rock, Syverson)

The mechanism behind the modesty. If you only read one of the three, this is the one with the most leverage for organisational practice.

AI was supposed to save us time. It may be making work heavier instead. (Daniel Pink)

A short LinkedIn post, but Pink names the operational consequence that the academic papers don’t quite reach: without redesigning the work system around AI, adoption doesn’t reduce demand, it intensifies it. Brynjolfsson explains why the redesign is necessary; Pink describes what happens inside organisations where it isn’t happening. The two read well together.

4. The (un) Intentional Organisation 😁

5. Keeping in Touch

Don’t hesitate to reach out by directly hitting “reply” to this newsletter or using my blog’s contact form.

I welcome any feedback on this newsletter and the content of my articles.

Very interesting post. I am also sharing my own point of view more generally: https://ottaviopugliares.substack.com/p/tanti-mercati-tanti-lavori?r=20mmha&utm_campaign=post&utm_medium=web&triedRedirect=true